How far is Petersburg, AK, from Repulse Bay?

The distance between Repulse Bay (Naujaat Airport) and Petersburg (Petersburg James A. Johnson Airport) is 1629 miles / 2622 kilometers / 1416 nautical miles.

Naujaat Airport – Petersburg James A. Johnson Airport


Distance from Repulse Bay to Petersburg

There are several ways to calculate the distance from Repulse Bay to Petersburg. Here are two standard methods:

Vincenty's formula (applied above)

- 1629.367 miles
- 2622.212 kilometers
- 1415.881 nautical miles

Vincenty's formula calculates the distance between latitude/longitude points on the earth's surface using an ellipsoidal model of the planet.
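
As an illustration of the ellipsoidal approach, here is a minimal sketch of Vincenty's inverse method in Python. It assumes the WGS-84 ellipsoid and uses the airport coordinates from this page converted to decimal degrees; the function name `vincenty_km`, the iteration limit, and the tolerance are choices made for this example, not details confirmed by the page. With these inputs the result should land close to the 2622 km figure quoted above.

```python
import math

def vincenty_km(lat1, lon1, lat2, lon2, max_iter=200, tol=1e-12):
    """Ellipsoidal distance in km (Vincenty inverse; WGS-84 is an assumption)."""
    a = 6378137.0                 # WGS-84 semi-major axis, metres
    f = 1 / 298.257223563         # WGS-84 flattening
    b = (1 - f) * a               # semi-minor axis

    # Reduced latitudes and longitude difference
    U1 = math.atan((1 - f) * math.tan(math.radians(lat1)))
    U2 = math.atan((1 - f) * math.tan(math.radians(lat2)))
    L = math.radians(lon2 - lon1)
    sinU1, cosU1 = math.sin(U1), math.cos(U1)
    sinU2, cosU2 = math.sin(U2), math.cos(U2)

    lam = L
    for _ in range(max_iter):
        sin_lam, cos_lam = math.sin(lam), math.cos(lam)
        sin_sigma = math.hypot(cosU2 * sin_lam,
                               cosU1 * sinU2 - sinU1 * cosU2 * cos_lam)
        if sin_sigma == 0:
            return 0.0            # coincident points
        cos_sigma = sinU1 * sinU2 + cosU1 * cosU2 * cos_lam
        sigma = math.atan2(sin_sigma, cos_sigma)
        sin_alpha = cosU1 * cosU2 * sin_lam / sin_sigma
        cos2_alpha = 1 - sin_alpha ** 2
        # cos(2 * sigma_m); defined as 0 on the equatorial line
        cos_2sm = (cos_sigma - 2 * sinU1 * sinU2 / cos2_alpha) if cos2_alpha else 0.0
        C = f / 16 * cos2_alpha * (4 + f * (4 - 3 * cos2_alpha))
        lam_new = L + (1 - C) * f * sin_alpha * (
            sigma + C * sin_sigma * (cos_2sm + C * cos_sigma * (-1 + 2 * cos_2sm ** 2)))
        converged = abs(lam_new - lam) < tol
        lam = lam_new
        if converged:
            break
    else:
        raise ValueError("Vincenty iteration did not converge")

    u2 = cos2_alpha * (a * a - b * b) / (b * b)
    A = 1 + u2 / 16384 * (4096 + u2 * (-768 + u2 * (320 - 175 * u2)))
    B = u2 / 1024 * (256 + u2 * (-128 + u2 * (74 - 47 * u2)))
    delta_sigma = B * sin_sigma * (cos_2sm + B / 4 * (
        cos_sigma * (-1 + 2 * cos_2sm ** 2)
        - B / 6 * cos_2sm * (-3 + 4 * sin_sigma ** 2) * (-3 + 4 * cos_2sm ** 2)))
    return b * A * (sigma - delta_sigma) / 1000.0

# Airport coordinates from this page, in decimal degrees (west is negative)
YUT = (66.521389, -86.224444)    # Naujaat Airport
PSG = (56.801667, -132.945000)   # Petersburg James A. Johnson Airport

print(f"{vincenty_km(*YUT, *PSG):.3f} km")
```

Small differences from the published figure are expected, since the coordinates here are rounded to the nearest arcsecond.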

Haversine formula

- 1623.768 miles
- 2613.202 kilometers
- 1411.016 nautical miles

The haversine formula calculates the distance between latitude/longitude points assuming a spherical earth, giving the great-circle distance (the shortest path between two points along the sphere's surface).
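
The spherical calculation can be sketched in a few lines of Python. This example assumes a mean earth radius of 6371 km (the page does not state which radius it uses) and takes the airport coordinates from this page in decimal degrees; `haversine_km` is a name chosen for illustration. The conversion factors are the standard definitions of the statute mile (1.609344 km) and the nautical mile (1.852 km).

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean earth radius; an assumption for this sketch

def haversine_km(lat1, lon1, lat2, lon2, radius_km=EARTH_RADIUS_KM):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    # Haversine of the central angle between the two points
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(h))

# Airport coordinates from this page, in decimal degrees (west is negative)
YUT = (66.521389, -86.224444)    # Naujaat Airport
PSG = (56.801667, -132.945000)   # Petersburg James A. Johnson Airport

km = haversine_km(*YUT, *PSG)
print(f"{km:.3f} km")
print(f"{km / 1.609344:.3f} miles")
print(f"{km / 1.852:.3f} nautical miles")
```

With these inputs the result should come out near the 2613 km listed above; the exact last digits depend on the radius chosen and on coordinate rounding.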

How long does it take to fly from Repulse Bay to Petersburg?

The estimated flight time from Naujaat Airport to Petersburg James A. Johnson Airport is 3 hours and 35 minutes.

What is the time difference between Repulse Bay and Petersburg?

Flight carbon footprint between Naujaat Airport (YUT) and Petersburg James A. Johnson Airport (PSG)

On average, flying from Repulse Bay to Petersburg generates about 188 kg (414 lbs) of CO2 per passenger. These figures are estimates and include only the CO2 generated by burning jet fuel.

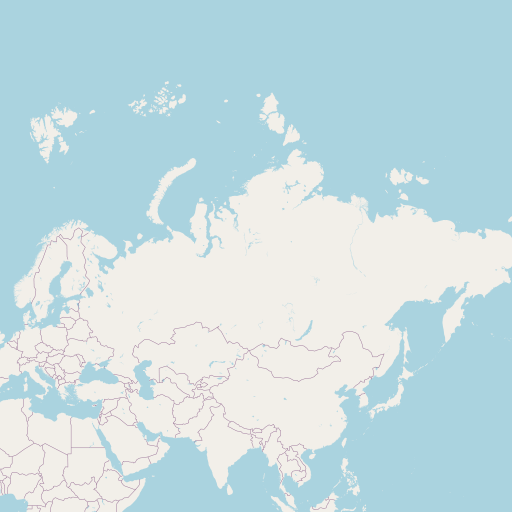

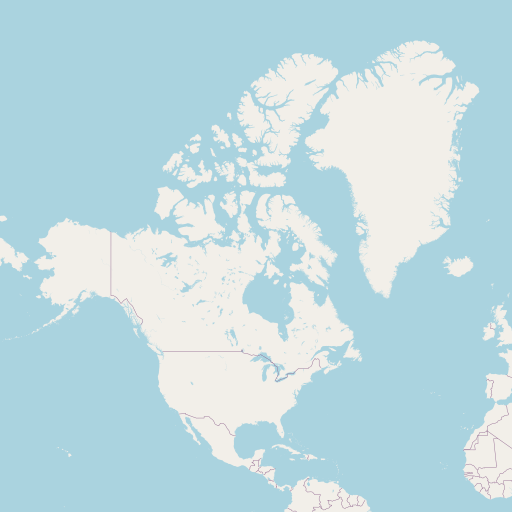

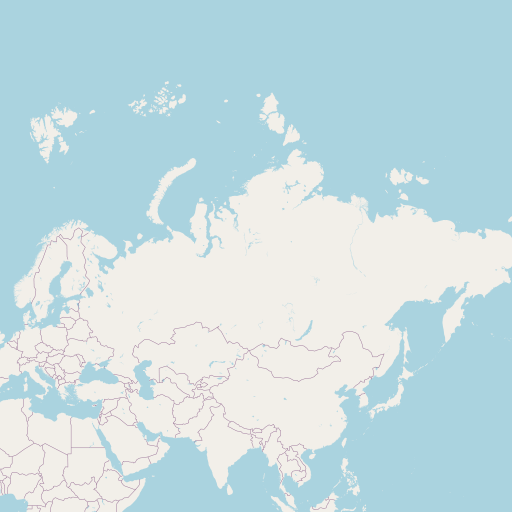

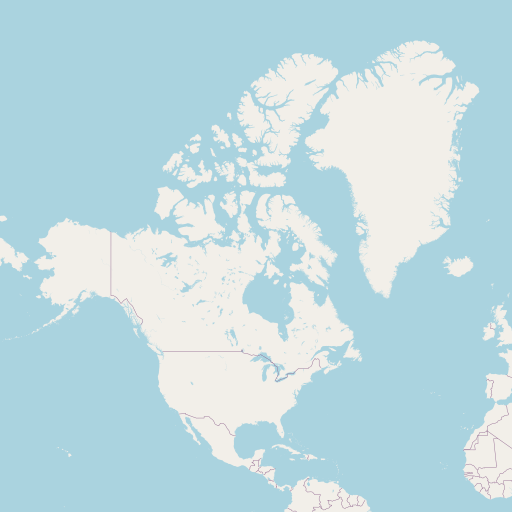

Map of flight path from Repulse Bay to Petersburg

See the map of the shortest flight path between Naujaat Airport (YUT) and Petersburg James A. Johnson Airport (PSG).

Airport information

| Origin | Naujaat Airport |
|---|---|
| City: | Repulse Bay |
| Country: | Canada |
| IATA Code: | YUT |
| ICAO Code: | CYUT |
| Coordinates: | 66°31′17″N, 86°13′28″W |

| Destination | Petersburg James A. Johnson Airport |
|---|---|
| City: | Petersburg, AK |
| Country: | United States |
| IATA Code: | PSG |
| ICAO Code: | PAPG |
| Coordinates: | 56°48′6″N, 132°56′42″W |
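
Distance formulas generally expect decimal degrees rather than the degrees/minutes/seconds notation used in the tables above. The following sketch shows one way to convert them; the helper name `dms_to_decimal` is hypothetical, and the sign convention (south and west negative) is the usual one.

```python
def dms_to_decimal(degrees, minutes, seconds, hemisphere):
    """Convert degrees/minutes/seconds plus N/S/E/W to signed decimal degrees."""
    if hemisphere not in ("N", "S", "E", "W"):
        raise ValueError(f"unknown hemisphere: {hemisphere!r}")
    value = degrees + minutes / 60 + seconds / 3600
    # South latitudes and west longitudes are negative by convention
    return -value if hemisphere in ("S", "W") else value

# Coordinates as listed in the airport tables
yut = (dms_to_decimal(66, 31, 17, "N"), dms_to_decimal(86, 13, 28, "W"))
psg = (dms_to_decimal(56, 48, 6, "N"), dms_to_decimal(132, 56, 42, "W"))

print(f"YUT: {yut[0]:.6f}, {yut[1]:.6f}")
print(f"PSG: {psg[0]:.6f}, {psg[1]:.6f}")
```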